This paper contains novel theoretical contributions to the area of learning-based algorithms in the sense that (i) PLISA is generically applicable to a broad class of sparse estimation problems, (ii) it provides a generalization analysis, a topic that has received less attention so far, and (iii) our analysis makes novel connections between the generalization ability and algorithmic properties such as stability and convergence of the unrolled algorithm, which leads to a tighter bound that can explain the empirical observations. The techniques could potentially be applied to analyze other learning-based algorithms in the literature. Other proposed methods consist of a projected gradient descent with ...

We theoretically show the improved recovery accuracy achievable by PLISA. Furthermore, we analyze the empirical Rademacher complexity of PLISA to characterize its generalization ability to solve new problems outside the training set. Sparse coding consists in representing signals as sparse linear combinations of ...
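For readers unfamiliar with the term, the empirical Rademacher complexity mentioned above is usually defined as follows. This is the standard textbook definition, not the specific bound derived for PLISA; the symbols $\mathcal{F}$ (a function class), $S = (z_1, \dots, z_n)$ (the training sample) and $\sigma_i$ (independent random signs) are notation assumed here for illustration.

$$
\hat{\mathfrak{R}}_S(\mathcal{F}) \;=\; \mathbb{E}_{\sigma}\!\left[\, \sup_{f \in \mathcal{F}} \frac{1}{n} \sum_{i=1}^{n} \sigma_i \, f(z_i) \right],
\qquad \sigma_i \in \{-1, +1\} \ \text{uniformly at random.}
$$

Roughly, it measures how well the function class can correlate with random noise on the training sample. A smaller value typically implies a smaller gap between training error and error on new problems.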

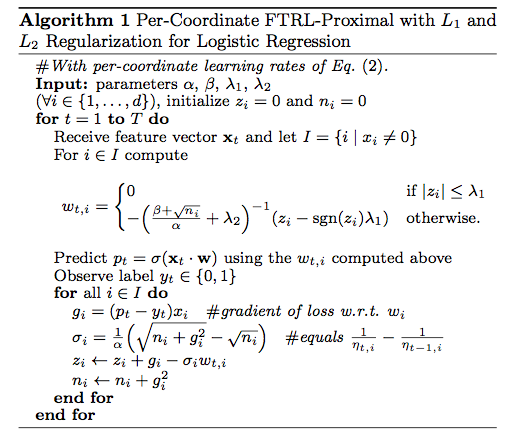

Abstract: Recovering sparse parameters from observational data is a fundamental problem in machine learning with wide applications. Many classic algorithms can solve this problem with theoretical guarantees, but their performance relies on choosing the correct hyperparameters. Besides, hand-designed algorithms do not fully exploit the particular problem distribution of interest. In this work, we propose a deep learning method for algorithm learning called PLISA (Provable Learning-based Iterative Sparse recovery Algorithm). PLISA is designed by unrolling a classic path-following algorithm for sparse recovery, with some components being more flexible and learnable.

Proximal gradient methods are a generalized form of projection used to solve non-differentiable convex optimization problems. The general proximal gradient algorithm is designed to solve the problem in ... The formulation of basis pursuit relaxes the linear constraint of P1 in the ... convex L1-norm and nonconvex L0-norm for sparse vector recovery. This algorithm is employed to recover/synthesize a signal satisfying simultaneously several convex constraints. ... recovery compared with state-of-the-art convex algorithms. ... alternating split Bregman are special instances of proximal algorithms.
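To make the proximal gradient idea above concrete, here is a minimal sketch of the iterative shrinkage-thresholding algorithm (ISTA) applied to an L1-regularized least-squares problem. It is not PLISA itself and not code from the paper. The function names (`soft_threshold`, `lasso_objective`, `ista`), the objective `0.5 * ||Ax - y||^2 + lam * ||x||_1`, and the conservative step size are all assumptions chosen for illustration.

```python
def soft_threshold(v, tau):
    """Proximal operator of tau * |.| for a scalar: shrink v toward zero by tau."""
    if v > tau:
        return v - tau
    if v < -tau:
        return v + tau
    return 0.0


def lasso_objective(A, y, x, lam):
    """Value of 0.5 * ||A x - y||^2 + lam * ||x||_1 (A is a list of rows)."""
    residual = [sum(a * xj for a, xj in zip(row, x)) - yi for row, yi in zip(A, y)]
    return 0.5 * sum(r * r for r in residual) + lam * sum(abs(xj) for xj in x)


def ista(A, y, lam, n_iters=200):
    """Proximal gradient descent: gradient step on the smooth term, then shrinkage."""
    m, n = len(A), len(A[0])
    # Assumption: use 1 / ||A||_F^2 as the step size. The squared Frobenius norm
    # upper-bounds the Lipschitz constant of the gradient, so the step is safe
    # (though possibly slower than a tuned one).
    step = 1.0 / sum(a * a for row in A for a in row)
    x = [0.0] * n
    for _ in range(n_iters):
        residual = [sum(a * xj for a, xj in zip(row, x)) - yi for row, yi in zip(A, y)]
        grad = [sum(A[i][j] * residual[i] for i in range(m)) for j in range(n)]
        x = [soft_threshold(xj - step * gj, step * lam) for xj, gj in zip(x, grad)]
    return x


# Tiny example: with A equal to the identity, the minimizer is the
# soft-thresholded observation, so the iterates approach [2.0, 0.0].
A = [[1.0, 0.0], [0.0, 1.0]]
y = [3.0, 0.5]
x_hat = ista(A, y, lam=1.0)
```

The step size and the threshold `lam` are exactly the kind of hyperparameters that hand-designed algorithms require the user to choose. Unrolled, learning-based methods instead fix the number of iterations and learn such quantities from data.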
